8 Common Sources Of Formative Assessment Data

contributed by Daniel R. Venables, Founder of Center for Authentic PLCs

What are the most common sources of formative assessment data?

First, let’s look at the purpose of formative assessment. Teachers look at data for one reason: to teach better.

We don’t look at data to compare teachers or students (though this sometimes happens) or to point fingers. Data are tools teachers can use to improve—not weapons to be wielded against them. With this notion in mind, I offer some thoughts about where to find some of the less obvious and often more useful data.

In order to teach better, we must learn where our kids are struggling and where our instruction has been ineffective. Data—at least good data—can help.

It’s tempting and commonplace to think of formative assessment strategies and data only in terms of numbers, tables of numbers, bar graphs, and such. These data tend to be the big data—or what I call macrodata—and very often reflect student performance on end-of-course tests or other high-stakes assessments. While these data are useful in analyzing achievement trends across a class, a grade level of students, or even various student subgroups, they paint the portrait of student achievement in very broad strokes.

Macrodata are great places for teacher teams and PLCs to start reviewing data, but to get a clearer sense of what is happening as our students learn, we need to look closely at the microdata. Herein lie the real clues for identifying gaps and fixing them before the gaps get too wide. The usefulness of student data is generally proportional to the frequency with which they are collected.

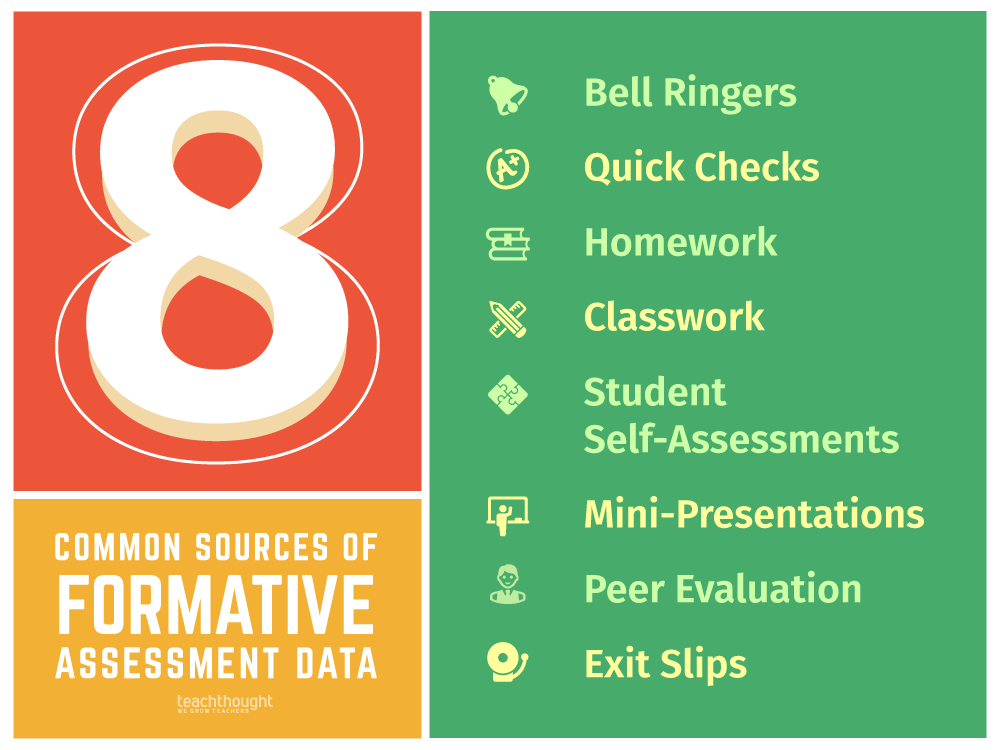

The following eight sources of daily classroom formative assessment data can be invaluable in informing instruction and guiding teachers as they modify that instruction.

8 Of The Most Common Sources Of Formative Assessment Data

1. Warm-Ups (Bell Ringers)

Warm-ups are a wonderful source of formative assessment data. Unfortunately, class warm-ups can sometimes be downtime for students while the classroom teacher takes attendance and performs other housekeeping tasks. In these cases, the warm-up activity provides a vague start to class and an opportunity for students to shut down (particularly if the warm-up questions are too difficult), which sets the stage for unproductivity.

In many other cases, however, I have witnessed teachers who used the warm-up questions to provide valuable information about the extent to which students understood yesterday’s work or work from days ago. Teachers in this setting can use their students’ performance on the warm-up activity to adjust that day’s instruction.

2. Checks-for-Understanding

Every time teachers ask the class a question during instruction, they receive formative assessment data about where their students are in their learning. But sometimes, the same three or four students answer all of the questions (and are usually right). This can produce skewed data that do not represent the mastery of the class as a whole.

To lessen this effect, many teachers have students respond to global questions using individual student whiteboards, allowing teachers to quickly assess the progress of all students during instruction.

Other teachers have students place Post-its™ or magnets on large charts or graphs indicating their understanding or allow them to ask anonymous questions about the content. Using electronic tools such as clickers, iPads, Kahoot, and even smartphone polls to measure student understanding is particularly effective (and engaging for students) and offers many useful sources of formative assessment data.

3. Homework

Homework is a time for students to practice and grapple with ideas and skills learned during class time. Meaningful homework assignments can provide teachers with valuable formative assessment data about where students are in their understanding.

There are lots of ways for teachers to assess the results of any given night’s homework, but even if just a sampling is reviewed, they can get a good sense for how students are proceeding in their mastery of the material. Homework that is not meaningful fails to provide the kind of information that can be helpful to teachers instructionally.

4. Classwork

Like homework, work undertaken in class provides lots of information about student mastery. Circulating among students as they complete classwork gives teachers a perfect opportunity not only to assess student understanding but also to gauge how effective the lesson was.

It’s much easier to backpedal during classwork to correct misunderstandings than it is to reteach an entire unit because the teacher found out too late. The classwork itself is among the best sources of formative assessment data a teacher has.

5. Student Self-Assessments

Occasionally during instruction, teachers can poll students to see where they think they are in their understanding, particularly during more complex content instruction. To do this, I prefer heads-down voting so students feel safe to respond honestly and unpressured to vote as their classmates are voting. Heads-down voting involves a show of fingers using the following scale (which I leave on a poster in the classroom):

1 = Totally confused.

2 = Shaky on this.

3 = I think I get this.

4 = Got it. Let’s move on.

6. Mini-Presentations

Another great source of information for teachers can come from mini-presentations, which provide some class time for students to share what it is they’re working on or what they have learned.

These presentations serve three purposes: (1) students demonstrate their understanding of the content, (2) they see and hear content discussed by their peers, often in ways that struggling students understand better (peer paraphrasing), and (3) because they are giving a public exhibition of mastery, they tend to take the learning task more seriously and do better work.

Keep in mind that these presentations don’t need to reflect lots of material to be effective. In fact, starting small is the best way to go.

7. Peer Evaluation/Scoring

Having students use a rubric to peer-evaluate or score a classmate’s work (particularly a work in progress) serves several objectives: (1) it forces students to become familiar with the language and expectations of rigor embedded in the rubric, (2) it engages students in a conversation about the quality of each other’s work and how to improve it, and (3) it provides teachers with formative data about how students are progressing in their work and in their mastery of the standard before the assignment is due.

8. Tickets-Out-The-Door (Exit Slips)

Want another, slightly simpler source of formative assessment data? Many teachers close their lesson with an exit slip for students to answer. These are typically short questions that reflect the content of that day’s lesson. Sometimes the question prompt has to do with how students feel about the material.

In either case, a thoughtfully crafted question for a ticket-out-the-door provides teachers with a brief check for understanding. The teacher can review these quickly, and useful information can be gleaned about what students really got out of that class. It’s a great way to stack the deck in your favor for tomorrow’s lesson.

Most classroom teachers are familiar with these and other great sources of formative assessment data that may not involve numbers but are invaluable in providing them with useful information about student mastery. These sources of microdata often allow teachers to be proactive in guiding their instruction rather than reactive, as is more typically the case when teachers respond only to macrodata.

Daniel R. Venables is founder and executive director of the Center for Authentic PLCs, an independent consulting firm committed to assisting schools in implementing, developing, and sustaining authentic PLCs. He is the author of How Teachers Can Turn Data Into Action (ASCD, 2014), which presents the Data Action Model, a teacher-friendly, systematic process for reviewing and responding to data in cycles of two to nine weeks. He is also the author of The Practice of Authentic PLCs: A Guide to Effective Teacher Teams (Corwin Press, 2011).