Taking A Look At Teacher Effectiveness Ratings: Part 1

by Grant Wiggins, Authentic Education

Can a chronically ‘failing’ school be inhabited by 100% ‘effective’ teachers?

That’s the question that has led Governor Cuomo to take a tough stance against the status quo of education in New York (for reasons known only to the Governor). His office recently released a grim document entitled The State of New York’s Failing Schools. The report poses the opening question rhetorically and then tries to answer it.

I have no comment here on the politics or wisdom of this move by Gov. Cuomo. I’m interested, rather, in a dispassionate consideration of the larger question raised by the report: What is, and what ought to be, the relationship between teacher ratings and school quality measures?

The Gist Of The Governor’s Report

Here are the facts that frame the Governor’s report:

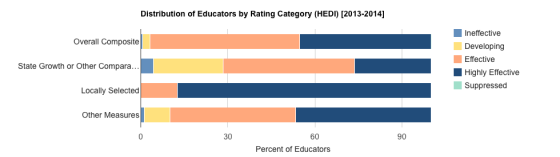

In the 2013-2014 school year, the teacher evaluation system resulted in the following ratings for New York State:

- 95.6 percent of teachers were rated Highly Effective or Effective

- 3.7 percent of teachers were rated Developing

- 0.7 percent of teachers were rated Ineffective

Yet, the report notes, the schools on the watch list are struggling with student achievement and have not shown much improvement over time:

- ELA Proficiency 5.9% (vs. 31.4% statewide)

- Math Proficiency 6.2% (vs. 35.8% statewide)

- Graduation Rate 46.6% (vs. 76.4% statewide)

Here’s the key conclusion in the Governor’s report:

It is incongruous that 99% of teachers were rated effective, while only 35.8 % of our students are proficient in math and 31.4 % in English language arts. How can so many of our teachers be succeeding when so many of our students are struggling?

That is surely a reasonable question; it does seem incongruous on its face. We should all be willing to consider that question, regardless of our feelings about the Governor and the politics of reform.

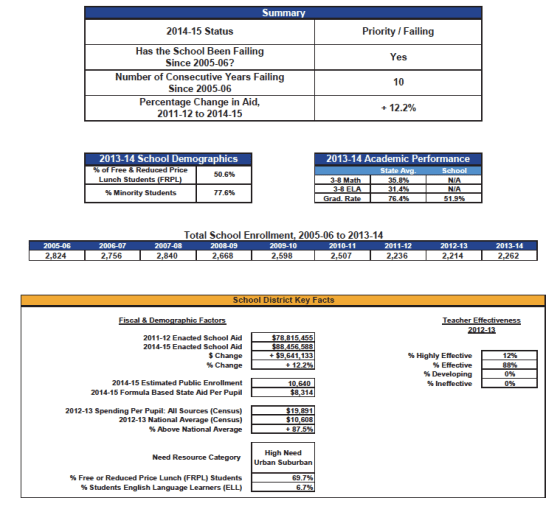

After the general case for pursuing this issue is made in the Cuomo report, each “failing” school is profiled in the Appendix. (The Governor’s Report calls these “failing” schools while the designation actually used by the Department of Education is “Priority” Schools.)

A Closer Look At The High School

Because I am greatly interested in high school reform, I decided to concentrate on the high school data. This focus also has the virtue of factoring out the new, tough, and controversial Common Core exams used in the lower grades, because the high school data are based on the widely accepted Regents Exam results and graduation rates.

The failing/priority determination was not made by the Governor’s Office. That designation is based on longstanding NYSED criteria for high schools: adequate growth in the English and Math Performance Index over two years, and a graduation rate of at least 60% along with growth over two years. Strictly speaking, then, the designation “failing” in the Cuomo Report should read: “failed to show adequate improvement once targeted as an under-performing school.”

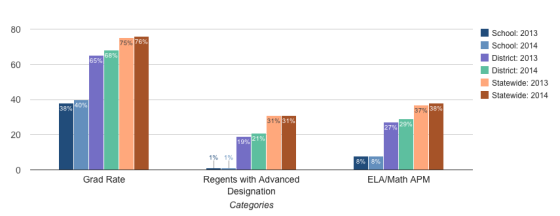

Here is a typical profile from the Governor’s report (there is one page for each “failing” school). As with many schools on the list, this high school has not made adequate progress over a ten-year period:

Effectiveness Ratings–For The School

Alas, as you see above, the Cuomo report inexplicably highlights only district teacher effectiveness scores, not school scores. (In this case, though, since the district reports 0% Ineffective or Developing teachers, we can infer that the same must be true for this school.) Nor does the report give exam scores for the high school, only graduation rates. So, to truly make the case the Governor wants to make, we need school-based Teacher Effectiveness ratings and school Regents Exam scores.

Fortunately, with a little digging on the NYSED site, I was able to find all the school data I needed. I picked three struggling high schools from the Cuomo Report list and three of the top-rated high schools in New York City to compare. (All six schools are public schools.)

Three Key Questions

Before we look at any specific school data, let’s ponder three predictive questions. What do you think?

- Should Teacher Effectiveness ratings in struggling schools generally be lower than, equal to, or higher than such ratings in the most successful high schools?

- In a school that needs to improve and does not, what would be a reasonable expectation for the percentage of teachers in each of the four Teacher Effectiveness categories: Ineffective, Developing, Effective, and Highly Effective?

- Should teacher ratings in the most struggling schools be lower than the district or state average, more or less equal to it, or higher than average? Or should there be no correlation at all?

I think that you’ll be interested in trying to predict the answers and in what I found.

The Data

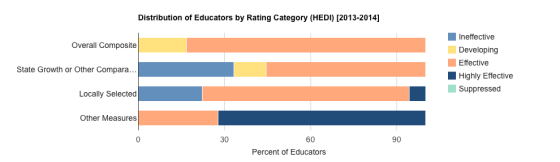

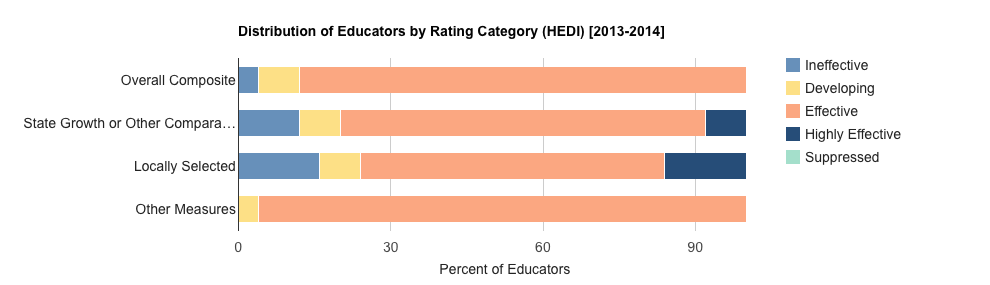

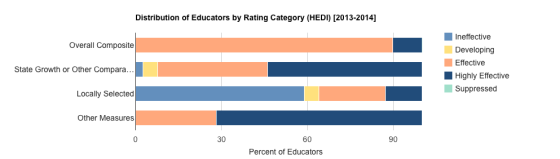

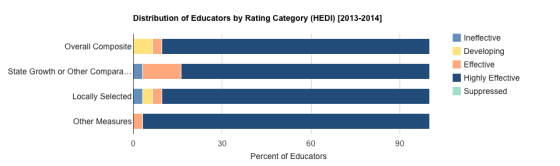

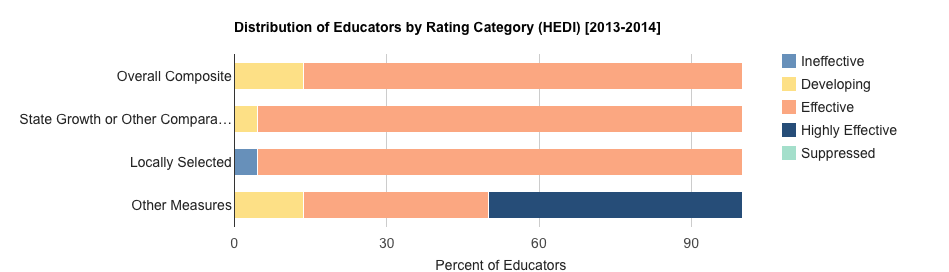

Here are the teacher effectiveness scores for six New York public high schools. So: based on the teacher effectiveness ratings alone, which high schools are struggling and which are very successful on state outcome measures?

Note that four different ratings are reported for each school: the composite (overall) score, a state-calculated score based on test scores and value-added metrics, and two internally generated scores. The two most salient scores, then, are the bottom two, because they are based on ratings assigned locally by administrators and on locally developed growth measures proposed by teachers (along with other local measures, in some cases).

From this data on six high schools, then, which would you predict are the three schools that are struggling and which would you predict are the three very successful schools?

Let’s add a bit more suspense before revealing the answer.

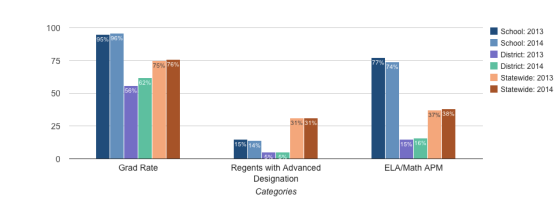

School Performance Data For The Six Schools

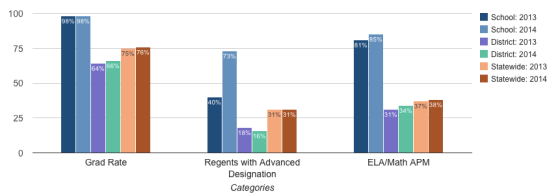

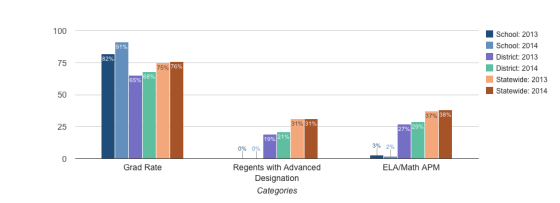

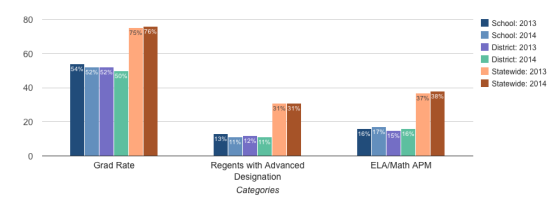

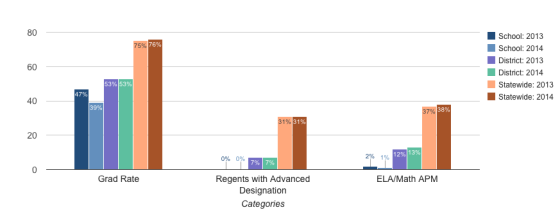

Here is the data for the same high schools on Regents Exams and Graduation Rate trends:

You can fairly easily see which data reflect the successful schools and which the unsuccessful ones in absolute terms: the first three schools listed are the successful ones. But can you pair each set of performance results with its partner set of teacher effectiveness data above?

The Results

Made your predictions? Here are the results:

The first three schools listed in both sets are the successful schools, and each school in the second set pairs up with its like-numbered partner in the first set. In other words, School #1 in the first list is School #1 in the second list, and Schools 1-3 are the successful ones.

So, the teachers in the struggling schools are rated far more highly internally than the teachers in some of New York’s most successful schools. (Two of these successful schools appear on many short lists of the best high schools in the City.) Indeed, in one of the struggling high schools (School #5), a school that has not made adequate improvement for 10 years, almost all teachers are rated Highly Effective locally!

Tentative Conclusion

From this (limited) data we can infer that in a successful school – whether clearly improving or doing well in absolute terms, on credible exams and client survey results – the local teacher effectiveness ratings are often lower, sometimes far lower, than those provided locally to teachers in failing schools.

So, there would appear to be some merit to the core premise of the Cuomo report, regardless of how mean-spirited the approach feels to many NY educators.

The Next Post

In Part 2, which I will post in a few days, I take a closer look at School #3 on this list (a successful high school in NYC) and compare its data to those from two other high schools in the City. New York City publishes a great deal of accountability data beyond test scores and teacher ratings, including survey data from teachers, students, and parents, and it offers an extensive Quality Report for each school, based on site visits. So, we can come away far more confident as to whether the Teacher Effectiveness Ratings have merit in City schools.

I will look at the data from the three schools and end with four recommendations on how to make the teacher effectiveness ratings more honest and valid, and hence credible.

This post first appeared on Grant’s personal blog as “Taking A Closer Look At Teacher Effectiveness Ratings: Part 1.” Grant can be found on Twitter. Adapted image attribution: flickr user tulanepublicrelations.