Create More Effective Multiple-Choice Questions With These 10 Tips

contributed by Dr. Stephen Murphy, Measured Progress

One of the critical elements in understanding what students know and are able to do is using spot-on assessment items that provide fair and valid data.

Good data helps teachers make instructional decisions, informing student groupings and identifying needs for additional instruction or stretch strategies for continued learning and growth. To better understand what goes into developing great assessment items, I’ve developed a guide that introduces the basic steps of the process. Here are nine steps, in the order they occur from creation to presentation to the student.

Assessment Quality? Tips For Writing Multiple-Choice Questions

1. Pinpoint the Purpose for Developing the Item

In other words, begin with the end in mind. What new information do I want about the student? What data do I want to collect? What information might I want to report—to the student or parent?

For example, if the goal is to assess grade 3 students’ knowledge of using “multiplication and division within 100 to solve word problems,” the item must be developed and structured to generate specific data about students’ grasp of this content.

2. Determine the Standard(s) to Which the Item Should Align

The purpose of writing an item should be clear; that is, every item should be written to a specific standard that you are interested in assessing. If an educator is interested in knowing whether a student has retained the desired understanding of a specific series of lessons, each item could be written to address one standard from this sequence of instruction.

It’s important for the item author to have clear expectations of the knowledge, skills, and abilities a student must have to demonstrate success with a particular standard.


3. Identify the Appropriate Structure for the Item

The most common structure for items is multiple-choice (MC), consisting of an item ‘stem’ that provides the information necessary to elicit a response, and a number of response options from which the student selects. Typical items for grades 3 through 8 have four response options and a single ‘key,’ or correct response.
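To make the stem, options, and key structure concrete, here is a minimal sketch in Python. The class name `MultipleChoiceItem`, its field names, and the muffin word problem are all hypothetical, chosen only for illustration. The four-option check reflects the typical grades 3 through 8 format described above, not a universal rule.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MultipleChoiceItem:
    """One MC item: a stem, its response options, and the position of the key."""
    stem: str
    options: tuple[str, ...]
    key_index: int  # position of the single correct response in `options`

    def __post_init__(self) -> None:
        # Assumption: four options, as is typical for grades 3 through 8.
        if len(self.options) != 4:
            raise ValueError("expected four response options")
        if len(set(self.options)) != len(self.options):
            raise ValueError("response options must be distinct")
        if not 0 <= self.key_index < len(self.options):
            raise ValueError("key_index must point at one of the options")

    @property
    def key(self) -> str:
        """The single correct response."""
        return self.options[self.key_index]

    @property
    def distractors(self) -> tuple[str, ...]:
        """Every option other than the key."""
        return tuple(o for i, o in enumerate(self.options) if i != self.key_index)


# Hypothetical grade 3 item, for illustration only.
item = MultipleChoiceItem(
    stem="A baker puts 6 muffins in each box. How many muffins are in 4 boxes?",
    options=("10", "24", "2", "64"),
    key_index=1,
)
print(item.key)          # 24
print(item.distractors)  # ('10', '2', '64')
```

Storing the key as a position rather than a second copy of the answer text means an item cannot end up with a key that is missing from its own options.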

In some instances, the content standards require more complex item types such as constructed-response or technology-enhanced items, or direct writing prompts. In choosing the item type, you need to identify the type of response that will allow students to demonstrate their understanding of the content, and determine the resulting scoring rules.

4. Target the Item to Your State’s Achievement Level Descriptors

Achievement Level Descriptors (ALDs, also called Performance Level Descriptors or Proficiency Level Descriptors) describe what students should know and be able to accomplish to be classified into a meaningful level of achievement. Targeting your items to ALDs can help you address a range of difficulty and give you an idea of what level your students might achieve.

For example, eMPower Assessments™ by Measured Progress have four achievement levels: Below Basic, Basic, Proficient, and Advanced. An item aligned to Proficient is one that students at the Proficient and Advanced levels should answer correctly, but that students with only a Basic or Below Basic understanding of the content are likely to miss.

In general, the verbs we use suggest the level of complexity of an item. For example, items that target advanced skills might ask students to analyze, construct, or interpret. To target proficiency, items might ask students to describe or represent.

At the basic level, students recall and compute. In eMPower, a college and career readiness assessment, students who are proficient in mathematics by the end of grade 4 can “solve multi-step mathematical problems … represent and compare fractions … identify and describe geometric properties … [and] use models to represent and solve nonstandard problems…”
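As a rough self-check while drafting, the verb-to-level idea can be sketched as a simple lookup. This is only an illustration: the verb lists below come from the examples in this section and are not an official ALD vocabulary, and the function name `suggested_levels` is hypothetical. It matches exact words only, so "computes" would not count as "compute".

```python
import re

# Verb lists taken from the examples in this section; not an official ALD vocabulary.
LEVEL_VERBS = {
    "Advanced": {"analyze", "construct", "interpret"},
    "Proficient": {"describe", "represent"},
    "Basic": {"recall", "compute"},
}


def suggested_levels(stem):
    """Return the levels whose sample verbs appear in the stem, highest level first."""
    words = set(re.findall(r"[a-z]+", stem.lower()))
    return [level for level, verbs in LEVEL_VERBS.items() if words & verbs]


print(suggested_levels("Interpret the graph and describe the trend."))
# ['Advanced', 'Proficient']
print(suggested_levels("Compute 48 divided by 6."))
# ['Basic']
```

A verb match is a prompt for a second look, not a verdict. The actual cognitive demand of an item depends on the whole task, so the ALDs themselves remain the reference.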

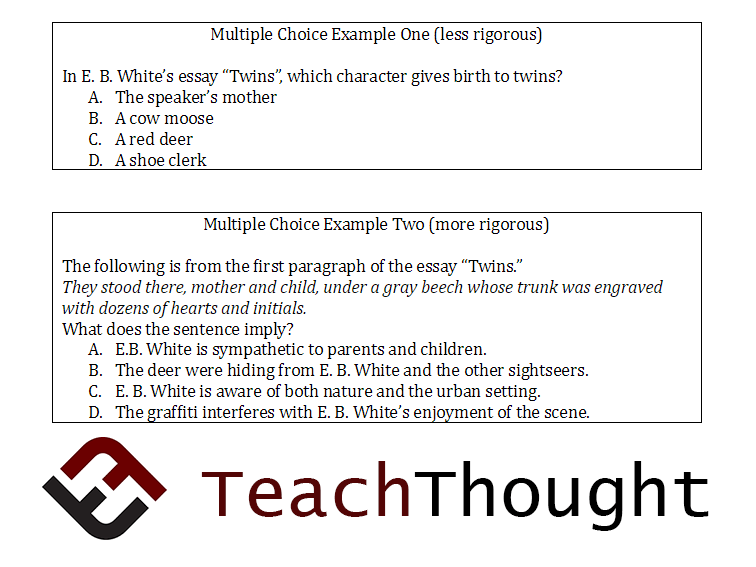

5. Create Strategic Multiple-Choice Questions

MC items may seem simple, but they need careful development and can provide significant insight if the distractors are strong. Good distractors are response options that reflect common errors in student thinking and therefore are plausible options for students to select if they don’t understand the content being assessed.

Effective distractors help detect students’ specific knowledge gaps. Used in formative practices, these items can help teachers focus instruction and bridge gaps. One approach to creating good distractors is to start with common misconceptions and create options that represent them. Finally, make sure that the wording of the item and distractors doesn’t create a second plausible correct answer.
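One way to see how distractors expose specific gaps is to pair each option with the reasoning that produces it, then tally which wrong options a class chose. The sketch below assumes a hypothetical item ("A baker puts 6 muffins in each box. How many muffins are in 4 boxes?") and a made-up set of ten responses. The option rationales and the function name `summarize_errors` are illustrative, not taken from any real assessment.

```python
from collections import Counter

# Hypothetical item: "A baker puts 6 muffins in each box. How many muffins are in 4 boxes?"
# Each option letter maps to (answer text, reasoning that leads to it). All illustrative.
OPTION_RATIONALES = {
    "A": ("10", "added 6 + 4 instead of multiplying"),
    "B": ("24", "correct: 6 x 4"),
    "C": ("2", "subtracted 4 from 6"),
    "D": ("64", "wrote the two digits side by side"),
}
KEY = "B"


def summarize_errors(responses):
    """Count how often each distractor was chosen, most frequent first."""
    wrong = Counter(r for r in responses if r != KEY)
    return [(opt, count, OPTION_RATIONALES[opt][1]) for opt, count in wrong.most_common()]


# Made-up class responses, for illustration only.
responses = ["B", "A", "B", "A", "C", "B", "A", "B", "D", "B"]
for option, count, rationale in summarize_errors(responses):
    print(f"{option}: {count} student(s) -> {rationale}")
```

In this invented data set, option A is the most common wrong answer, which would point toward students adding when the problem calls for multiplying. That kind of pattern is what makes a well-built distractor useful in formative practice.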

6. Eliminate Barriers for Students

To provide all students with the best opportunity to demonstrate their mastery of the content standard being assessed, items must be free of bias or sensitive issues that might detract from a student’s ability to engage with the item.

In addition, the item must be written to the specific targeted grade and vocabulary level and be clear and concise, avoiding unnecessary reading burden where reading is not being assessed.

7. Include All Necessary Supports for Generating a Response

While this might seem obvious, it’s important to include all the tools and information that a student needs to interpret and respond to an item. This applies to all item types. Here are some examples.

Does the item require a stimulus, a passage, or a graphic? If so, is all the necessary information provided, and is it appropriate and accessible to the student? Passages should reflect appropriate vocabulary and text complexity. Graphics need to be clear and should not include extraneous information.

If the mathematics content standard allows a student to use a calculator, that must be evident, and the appropriate tool must be available to the student.

8. Use Engaging Material

Students are more motivated to respond carefully if the items are based on contexts they are likely to encounter in their day-to-day lives. If using graphics or illustrations, make sure they are appropriate for the grade level and familiar to students from the world around them.

The contexts should be authentic—not far-fetched or unlikely.

9. Make Sure the Students’ Responses Will Provide the Right Kind of Information

Time is a precious commodity for educators, so it is important to end this checklist where it began: with the end in mind. As you plan and write items, keep checking them against the kinds of information you need. Especially for formative assessment practices, focus on identifying students’ strengths and needs, and on using the items to help you adjust instruction.

BONUS STEP: Implement Quality Review.

Once the items are written, undertake these quality checks.

1. Have another grade/content expert confirm that the content (and the identification of a single correct option for MC items) is correct. This reviewer can solve each problem and identify any gaps or errors.

2. Have someone you trust as an editor make sure that the grammar is correct and the wording is appropriate.

3. Finally, conduct a review of each item in its final published form to verify that it has all come together correctly and is something a student can indeed respond to.

Dr. Stephen Murphy is Vice President, Measurement Services, at Measured Progress. He leads the groups responsible for all aspects of assessment design and development, including psychometrics, content development, publishing, and content management.
